Next step in light microscopy image improvement

New deep learning architecture works more efficiently than widely used methods

It is computational image processing that reveals the finest details of samples placed under all kinds of light microscopes. Although this processing has come a long way, there is still room to improve, for example, image contrast and resolution. Based on a unique deep learning architecture, a new computational model developed by researchers from the Center for Advanced Systems Understanding (CASUS) at Helmholtz-Zentrum Dresden-Rossendorf (HZDR) and the Max Delbrück Center for Molecular Medicine is faster than traditional models while matching or even surpassing their image quality. The model, called Multi-Stage Residual-BCR Net (m-rBCR), was developed specifically for microscopy images. It was first presented at the biennial European Conference on Computer Vision (ECCV), the premier event in the computer vision and machine learning field, and the corresponding peer-reviewed conference paper is now available.

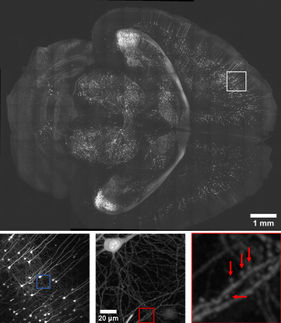

The model puts a new twist on an image processing technique called deconvolution. This computationally intensive method improves the contrast and resolution of digital images captured with optical microscopes such as widefield, confocal, or transmission microscopes. Deconvolution aims to reduce blur, a specific type of image degradation introduced by the microscope's optical system itself. The two main strategies are explicit deconvolution and deep learning-based deconvolution.

Explicit deconvolution approaches are based on the concept of a point spread function (PSF). A PSF describes how the optical system widens an infinitely small point source of light in the sample and spreads it into a three-dimensional diffraction pattern. As a result, a recorded (two-dimensional) image always contains some light from out-of-focus structures, and this light produces the blur. If you know the PSF of a microscope, you can computationally remove the blur and obtain an image that resembles the true sample much more closely than the unprocessed recording does.
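The explicit, PSF-based approach can be sketched in a few lines of NumPy. This is a minimal illustration under loudly stated assumptions: it works in 2D rather than 3D, uses a hypothetical Gaussian PSF (real microscope PSFs are measured or modeled from the optics), treats blur as circular convolution, and removes it with Wiener deconvolution, one classical explicit method chosen here for illustration. It is not the m-rBCR method, and the function names and the regularization constant `k` are illustrative choices.

```python
import numpy as np

def gaussian_psf(shape, sigma):
    """Hypothetical 2D Gaussian PSF, centered in the array and summing to 1."""
    ny, nx = shape
    y = np.arange(ny) - ny // 2
    x = np.arange(nx) - nx // 2
    yy, xx = np.meshgrid(y, x, indexing="ij")
    psf = np.exp(-(xx**2 + yy**2) / (2.0 * sigma**2))
    return psf / psf.sum()

def otf_from_psf(psf):
    """Move the PSF center to index (0, 0) and take its 2D FFT (the optical transfer function)."""
    return np.fft.fft2(np.fft.ifftshift(psf))

def blur(image, psf):
    """Forward model: circular convolution of the sample with the PSF, computed via the FFT."""
    return np.real(np.fft.ifft2(np.fft.fft2(image) * otf_from_psf(psf)))

def wiener_deconvolve(observed, psf, k=1e-3):
    """Wiener-style deconvolution with a known PSF; k stands in for the noise-to-signal ratio."""
    otf = otf_from_psf(psf)
    wiener_filter = np.conj(otf) / (np.abs(otf) ** 2 + k)
    return np.real(np.fft.ifft2(np.fft.fft2(observed) * wiener_filter))

# Toy example: three point sources on a black background, blurred and slightly noisy.
rng = np.random.default_rng(0)
truth = np.zeros((64, 64))
truth[20, 20] = truth[40, 45] = truth[32, 10] = 1.0
psf = gaussian_psf(truth.shape, sigma=2.0)
observed = blur(truth, psf) + rng.normal(0.0, 1e-4, truth.shape)
restored = wiener_deconvolve(observed, psf, k=1e-3)

mse_observed = np.mean((observed - truth) ** 2)
mse_restored = np.mean((restored - truth) ** 2)
print(f"MSE before: {mse_observed:.2e}, after: {mse_restored:.2e}")
print(f"peak at a point source before: {observed[20, 20]:.3f}, after: {restored[20, 20]:.3f}")
```

The sketch only works because `psf` is known exactly; with a wrong or missing PSF, the same filter amplifies errors instead of removing blur, which is the limitation discussed next.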

“The big problem with PSF-based deconvolution techniques is that often the PSF of a given microscopic system is unavailable or imprecise,” says Dr. Artur Yakimovich, Leader of a CASUS Young Investigator Group and corresponding author of the ECCV paper. “For decades, people have been working on so-called blind deconvolution where the PSF is estimated from the image or image set. However, blind deconvolution is still a very challenging problem and the achieved progress is modest.”

As the Yakimovich team has shown previously, the “inverse problem solving” toolbox works well in microscopy. Inverse problems deal with recovering the causal factors that led to certain observations. Typically, solving this kind of problem successfully requires a lot of data and deep learning algorithms. As with explicit deconvolution methods, the results are higher-resolution or better-quality images. For the approach presented at the ECCV, the scientists used m-rBCR, a physics-informed neural network.

Deep learning deployed differently

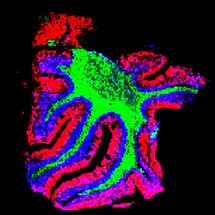

In general, image processing can start from one of two representations: the classical spatial representation of an image or its frequency representation, which requires a transformation step from the spatial representation. In the frequency representation, an image is described as a collection of waves. Both representations are valuable, because some processing operations are easier in one form and some in the other. The vast majority of deep learning architectures operate in the spatial domain, which is well suited to photographs. Microscopy images, however, are different. They are mostly monochromatic, and in techniques such as fluorescence microscopy they show specific light sources on a black background. Hence, m-rBCR uses the frequency representation as its starting point.
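The link between the two representations can be illustrated with the convolution theorem: blurring an image in the spatial domain corresponds to a simple element-wise multiplication in the frequency domain. The NumPy sketch below checks this on a hypothetical sparse, fluorescence-like image; the helper names and the box-blur kernel are made up for illustration and say nothing about how m-rBCR itself is implemented.

```python
import numpy as np

def convolve_spatial(image, kernel):
    """Circular convolution in the spatial domain: add shifted, weighted copies of the image."""
    out = np.zeros(image.shape, dtype=float)
    for dy in range(kernel.shape[0]):
        for dx in range(kernel.shape[1]):
            out += kernel[dy, dx] * np.roll(image, shift=(dy, dx), axis=(0, 1))
    return out

def convolve_frequency(image, kernel):
    """Same operation in the frequency domain: multiply the two spectra, then transform back."""
    # fft2 with s=image.shape zero-pads the small kernel to the image size.
    spectrum = np.fft.fft2(image) * np.fft.fft2(kernel, s=image.shape)
    return np.real(np.fft.ifft2(spectrum))

# Hypothetical sparse, fluorescence-like image: a few bright spots on black.
image = np.zeros((32, 32))
image[5, 7] = 1.0
image[18, 22] = 0.6
image[27, 3] = 0.8
kernel = np.ones((3, 3)) / 9.0  # simple box blur standing in for a PSF

spatial = convolve_spatial(image, kernel)
frequency = convolve_frequency(image, kernel)
print(np.allclose(spatial, frequency))  # True

# A single point source has a flat magnitude spectrum: every wave contributes equally.
point = np.zeros((32, 32))
point[5, 7] = 1.0
print(np.allclose(np.abs(np.fft.fft2(point)), 1.0))  # True
```

The spatial version costs one pass over the image per kernel entry, while the frequency version replaces the whole convolution with a single multiplication between two FFTs, which is one reason frequency-domain formulations can be compact.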

“Using the frequency domain in such cases can help create optically meaningful data representations – a notion that allows m-rBCR to solve the deconvolution task with surprisingly few parameters compared to other modern-day deep learning architectures,” explains Rui Li, first author and presenter at the ECCV. Li proposed advancing the neural network architecture of a model called BCR-Net, which was itself inspired by a frequency representation-based signal compression scheme introduced in the 1990s by Gregory Beylkin, Ronald Coifman, and Vladimir Rokhlin (hence the name BCR transform).
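The scheme by Beylkin, Coifman, and Rokhlin builds on wavelets, which split an image into a coarse approximation plus detail components at successive scales. As a hedged illustration of that kind of multiscale decomposition only (not of BCR-Net or m-rBCR, whose internals are not described here), the sketch below implements one level of the orthonormal 2D Haar wavelet transform in NumPy. The band names `ll`, `lh`, `hl`, `hh` follow a common convention and are assumptions of this example.

```python
import numpy as np

SQRT2 = np.sqrt(2.0)

def haar2d(x):
    """One level of the orthonormal 2D Haar transform (input sides must be even)."""
    a = (x[0::2, :] + x[1::2, :]) / SQRT2   # sums of neighboring row pairs
    d = (x[0::2, :] - x[1::2, :]) / SQRT2   # differences of neighboring row pairs
    ll = (a[:, 0::2] + a[:, 1::2]) / SQRT2  # coarse approximation
    lh = (a[:, 0::2] - a[:, 1::2]) / SQRT2  # detail band
    hl = (d[:, 0::2] + d[:, 1::2]) / SQRT2  # detail band
    hh = (d[:, 0::2] - d[:, 1::2]) / SQRT2  # detail band
    return ll, lh, hl, hh

def ihaar2d(ll, lh, hl, hh):
    """Exact inverse of haar2d: undo the column step, then the row step."""
    a = np.empty((ll.shape[0], 2 * ll.shape[1]))
    d = np.empty_like(a)
    a[:, 0::2], a[:, 1::2] = (ll + lh) / SQRT2, (ll - lh) / SQRT2
    d[:, 0::2], d[:, 1::2] = (hl + hh) / SQRT2, (hl - hh) / SQRT2
    x = np.empty((2 * a.shape[0], a.shape[1]))
    x[0::2, :], x[1::2, :] = (a + d) / SQRT2, (a - d) / SQRT2
    return x

# A smooth test image: nearly all of its energy should land in the coarse band.
i = np.arange(64)
smooth = np.outer(np.sin(2 * np.pi * i / 64), np.cos(2 * np.pi * i / 64))
bands = haar2d(smooth)
energies = [np.sum(b ** 2) for b in bands]
total = np.sum(smooth ** 2)
print(np.allclose(ihaar2d(*bands), smooth))  # True: the transform is invertible
print(f"share of energy in detail bands: {sum(energies[1:]) / total:.4f}")
```

Because the transform is orthonormal, energy is preserved, and for smooth content the detail bands are tiny and could be stored coarsely or dropped. That concentration of information in a few coefficients is what makes wavelet-type representations attractive for compression and for compact models.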

The team validated the m-rBCR model on four different datasets: two simulated microscopy image datasets and two real microscopy datasets. The model performs well with significantly fewer trainable parameters and a shorter run time than the latest deep learning-based models and, of course, it also outperforms explicit deconvolution methods.

A model tailored to microscopy images

“This new architecture is leveraging a neglected way to learn representations beyond the classic convolutional neural network approaches,” summarizes co-author Prof. Misha Kudryashev, Leader of the “In Situ Structural Biology” Group of Max-Delbrück-Centrum für Molekulare Medizin in Berlin. “Our model significantly reduces potentially redundant parameters. As the results show, this is not accompanied by a loss of performance. The model is explicitly suitable for microscopy images and, having a lightweight architecture, it is challenging the trend of ever-bigger models that require ever more computing power.”

The Yakimovich group recently published an image quality boosting model based on generative artificial intelligence. This Conditional Variational Diffusion Model produces state-of-the-art results that surpass even the m-rBCR model presented here. “However, you need training data and computational resources, including sufficient graphics processing units, which are much sought after these days,” Yakimovich notes. “The lightweight m-rBCR model does not have these limitations and still delivers very good results. I am therefore confident that we will gain good traction in the imaging community. To facilitate this, we have already started to improve its user-friendliness.”

The Yakimovich group “Machine Learning for Infection and Disease” aims to understand the complex network of molecular interactions that becomes active after the body has been infected with a pathogen. Exploiting the new possibilities of machine learning is key to this work. Areas of interest include image resolution improvement, 3D image reconstruction, automated disease diagnosis, and evaluation of image reconstruction quality.